Project introduction and background information

Many BSc Soil, Water, and Atmosphere (BSW) students at Wageningen University submit assignments without critically evaluating their own work. Lecturer observations and course evaluations consistently show that students lack either the attitude for honest self-assessment or the skill to judge their work against clear standards, which limits their confidence, the quality of their peer feedback, and their independent learning.

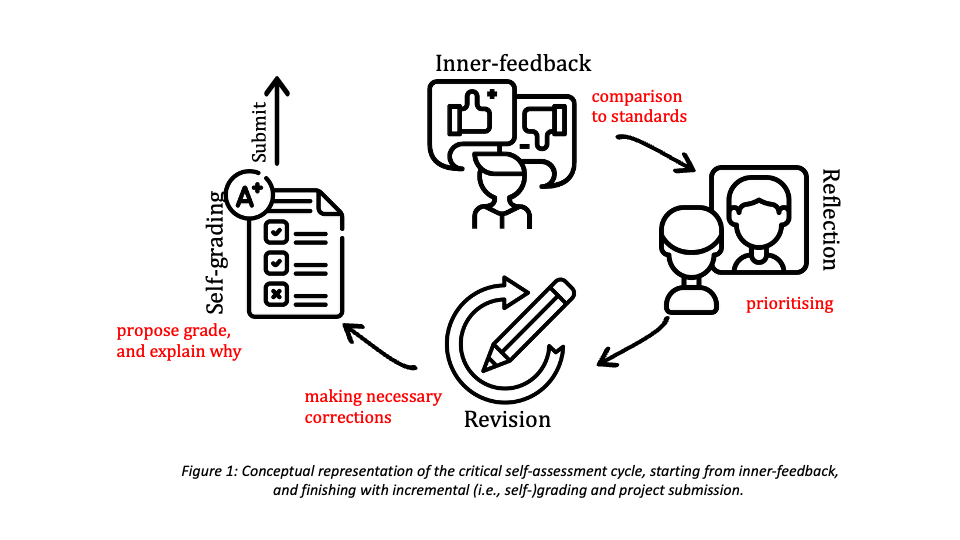

This project embeds a four-step self-assessment cycle — inner-feedback, reflection, revision, and incremental grading — into strategically selected BSW courses. Drawing on established concepts from higher education research, the cycle gives students structured opportunities to evaluate and improve their work before submission. The goal is to develop reflective, self-reliant learners who can honestly assess their own performance, a skill that extends well beyond the classroom into professional and personal life.

Objective and expected outcomes

Objective: To develop critical self-assessment as a core competency in BSc Soil, Water, and Atmosphere (BSW) students by embedding a structured four-step self-assessment cycle into selected courses across the programme, strengthening formative assessment, personal leadership, and reflective learning.

Approach

Theoretical foundation

- The project builds on two established concepts in higher education: inner-feedback (Nicol, 2020) — the knowledge students generate when comparing their own performance against reference standards — and incremental grading (Köppe et al., 2020) — a student-driven assessment approach in which students grade their own work using defined criteria

- Although both methods are known and have been practiced individually at WUR, this project is the first to combine them into a structured longitudinal cycle embedded across an entire BSc programme

The four-step self-assessment cycle

- Inner-feedback — students evaluate their work or knowledge against clearly defined criteria (rubrics, checklists, learning outcomes, good practice examples), and identify what needs to improve

- Reflection — students analyse their points of improvement, prioritise them based on importance and effort, and decide on concrete next steps

- Revision — students implement improvements and decide whether to submit their work or return to the inner-feedback step for another round

- Incremental grading — students propose their own grade based on defined criteria; teacher feedback focuses primarily on differences between the student's and the lecturer's assessment

By integrating these methods, the project strengthens student-centred education and formative assessment, fostering reflective, self-reliant scientists who can critically evaluate their work and collaborate effectively in real-world contexts.

Implementation

- Design a toolset of rubrics, checklists, exam-grade expectation definitions, and good practice examples, tailored to different courses and year levels.

- Implement the cycle in strategically selected BSW courses, starting with one assignment per course for first-year students and gradually increasing in complexity as students progress through their degree.

- Work in close collaboration with course coordinators, lecturers, and education innovation staff throughout design and implementation.

Results and learnings

What the project accomplished:

The Honest Mirror project has been fully implemented in two courses at Wageningen University:

- Learn the Scientific Method in a Changing Climate (MAQ52806) — a research-skills course in which students evaluate their own writing and scientific reasoning across two assessment moments: a proposal (week 4) and a final report (week 8)

- Atmosphere-Vegetation-Soil Interaction (AVSI) — a content-knowledge course in which students evaluate their own understanding across three consecutive exam moments: two midterms and a final exam

In both courses, students proposed their own grade at each assessment moment alongside a short written justification, which was then compared to the grade assigned by the examiner.

Key results

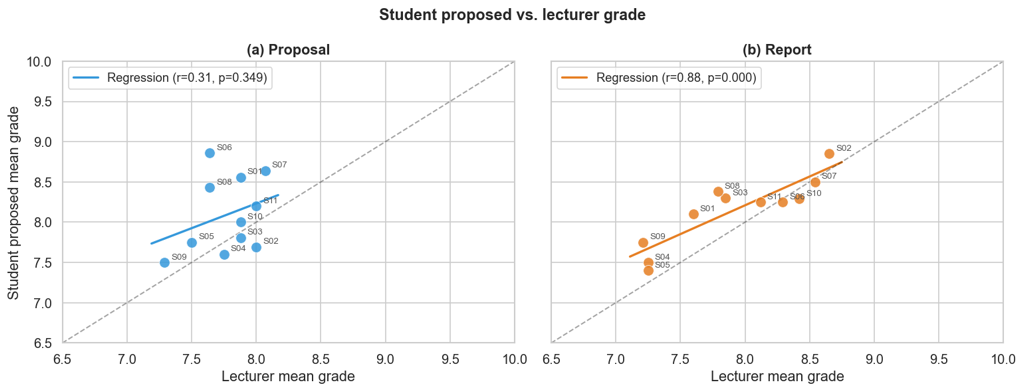

Results from the scientific method course are presented in Figure 2 as scatter plots, with the examiner's grade on the x-axis and the student-proposed grade on the y-axis. The left panel shows the grades from the early stage of the course (the proposal phase, week 4), and the right panel shows the grades from the end of the course (the report phase, week 8).

Figure 2: Alignment between student-proposed and lecturer-assigned grades in the Proposal (a) and Final Report (b). Each point represents one student (n = 11). The coloured line shows the linear regression; the dashed line represents perfect agreement. Pearson correlation coefficients and significance levels are reported in each panel.

Figure 2 shows the relationship between student-proposed and lecturer-assigned mean grades for the Proposal (panel a) and the Final Report (panel b). In the Proposal phase, the Pearson correlation test found no significant correlation between student and lecturer grades (r = 0.31, p = 0.349), indicating that students' initial self-assessments varied widely and were largely unrelated to the quality of their work as judged by the examiner. Several students substantially overestimated their performance (e.g., S01, S07, S08), while others underestimated it (e.g., S02, S03), with individual discrepancies of up to 20% of the grade scale. By the Final Report (Figure 2b), the correlation had increased markedly and become statistically significant (r = 0.89, p < 0.001), and the mean bias had decreased from 0.32 to 0.24 grade points.

This convergence suggests that repeated engagement with self-assessment cycles, including peer-feedback, structured reflection, and iterative revision, helped students calibrate their judgement against formal academic standards. The improved alignment also suggests growing confidence in evaluating their own performance and indicates that structured reflection can reduce tendencies towards grade inflation or deflation. Overall, the progression demonstrates the value of integrating self-assessment into multiple stages of coursework and supports its role in fostering student-centred learning, personal leadership, and formative assessment.
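The two statistics reported for Figure 2, the Pearson correlation and the mean bias, can be illustrated with a minimal Python sketch. The grades below are hypothetical and are not the project data. The function names `pearson_r` and `mean_bias` are illustrative, and the sketch assumes that mean bias is the signed average of proposed minus assigned grades, which the text does not define explicitly.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def mean_bias(proposed, assigned):
    """Mean signed difference (proposed minus assigned).

    Assumption: a positive value means students overestimate on average.
    """
    return sum(p - a for p, a in zip(proposed, assigned)) / len(proposed)


# Hypothetical grades on a 1-10 scale (illustrative only, not project data)
examiner = [6.0, 7.0, 7.5, 8.0, 6.5]
student = [6.5, 7.0, 8.0, 8.0, 7.0]

r = pearson_r(examiner, student)
bias = mean_bias(student, examiner)
print(f"r = {r:.2f}, mean bias = {bias:.2f} grade points")
```

A correlation close to 1 together with a bias close to 0 would correspond to points lying near the dashed line of perfect agreement in the figure.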

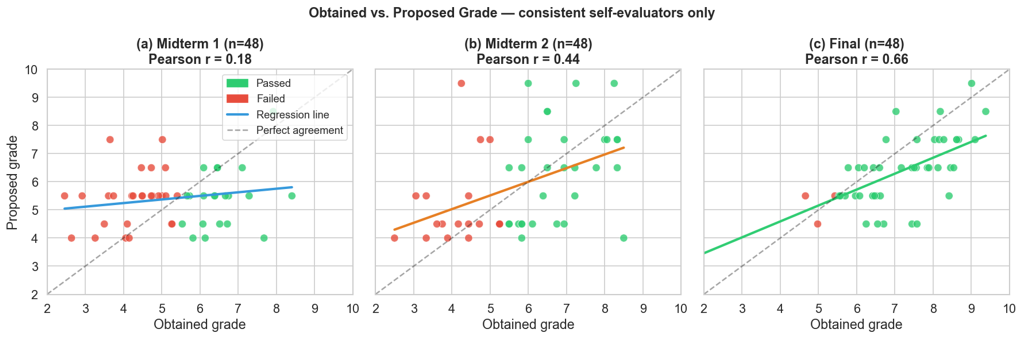

To investigate whether students' ability to accurately assess their own performance improves over the course of the semester, we analysed the relationship between obtained and student-proposed grades across three consecutive assessment moments (see Figure 3). Only students who completed a self-evaluation at all three time points were included in this analysis (n = 47), ensuring that the observed trends reflect genuine development in self-assessment behaviour rather than differences in cohort composition between exams. This approach allowed us to distinguish between self-assessment as a skill — the ability to judge one's own performance — and self-assessment as a reflection of content knowledge, since the same students were tracked as their familiarity with the course material grew.
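The inclusion rule described above (only students with a self-evaluation at all three moments) amounts to a complete-case filter. The following Python sketch shows one way to express it. The record layout, the exam keys, and the student IDs are hypothetical and chosen only for illustration; they do not reflect how the project data were actually stored.

```python
# Hypothetical exam keys for the three assessment moments
EXAMS = ("midterm1", "midterm2", "final")


def complete_cases(records):
    """Keep only students with an obtained and a proposed grade for every exam."""
    kept = {}
    for student_id, exams in records.items():
        has_all = all(
            exam in exams
            and exams[exam].get("obtained") is not None
            and exams[exam].get("proposed") is not None
            for exam in EXAMS
        )
        if has_all:
            kept[student_id] = exams
    return kept


# Hypothetical records (illustrative only, not project data)
records = {
    "S01": {
        "midterm1": {"obtained": 6.0, "proposed": 7.0},
        "midterm2": {"obtained": 6.5, "proposed": 7.0},
        "final": {"obtained": 7.0, "proposed": 7.0},
    },
    "S02": {  # did not take part in Midterm 2
        "midterm1": {"obtained": 5.0, "proposed": 6.5},
        "final": {"obtained": 6.0, "proposed": 6.0},
    },
    "S03": {  # no self-evaluation at the final exam
        "midterm1": {"obtained": 7.5, "proposed": 7.0},
        "midterm2": {"obtained": 8.0, "proposed": 7.5},
        "final": {"obtained": 8.0, "proposed": None},
    },
}

included = complete_cases(records)
print(sorted(included))
```

Tracking the same students across all moments means that changes in the correlation cannot be attributed to a different mix of students at each exam.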

Figure 3: Development of self-assessment accuracy across three examination moments in AVSI (cohorts 2024 & 2025). Scatter plots showing the relationship between obtained grades and student-proposed grades for Midterm 1 (a), Midterm 2 (b), and the Final exam (c). Dot colour indicates pass/fail status (green: ≥ 5.5; red: < 5.5). The coloured line shows the linear regression; the dashed line represents perfect agreement. Pearson r increased from 0.18 (p = 0.228) to 0.44 (p = 0.002) to 0.66 (p < 0.001) across the three exams.

Figure 3 shows the correlation between obtained and proposed grades for this consistent subgroup. In Midterm 1, the Pearson correlation test found no significant correlation (r = 0.18, p = 0.228), indicating that students were largely unable to assess their own performance at this early stage of the course. By Midterm 2, a moderate and statistically significant correlation had emerged (r = 0.44, p = 0.002), suggesting that students began to develop a more accurate sense of their performance after receiving feedback from the first exam. This trend continued through the Final exam, where a strong and highly significant correlation was observed (r = 0.66, p < 0.001), indicating that by the end of the course students had substantially improved their ability to judge their own performance.
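The text does not state how the p-values were computed. Assuming the standard two-sided t-test for a Pearson correlation, the test statistic follows from r and the sample size as t = r·sqrt(n − 2) / sqrt(1 − r²), with n − 2 degrees of freedom. The Python sketch below applies this to the reported r values with n = 47; the function name `t_statistic` is illustrative. Converting t to a p-value requires the t-distribution (available, for example, in a statistics library) and is left out to keep the sketch self-contained.

```python
from math import sqrt


def t_statistic(r, n):
    """t statistic for testing whether a Pearson r differs from zero (df = n - 2)."""
    if n < 3:
        raise ValueError("need at least three paired observations")
    if not -1.0 < r < 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    return r * sqrt(n - 2) / sqrt(1.0 - r * r)


# r values and n = 47 as reported for Figure 3
reported = [("Midterm 1", 0.18), ("Midterm 2", 0.44), ("Final exam", 0.66)]
for label, r in reported:
    print(f"{label}: r = {r:.2f}, t = {t_statistic(r, 47):.2f} (df = 45)")
```

For a fixed sample size, t grows with r, which is why the same group of students yields a non-significant result at Midterm 1 and a highly significant one at the Final exam.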

Taken together, the results from both courses point to the same conclusion: self-assessment accuracy is not a fixed trait but a skill that improves with structured, repeated practice.

Lessons learned

- The cycle works, but takes time. Improvement in self-assessment accuracy was not immediate — it developed gradually across multiple assessment moments. This confirms that self-assessment needs to be embedded at multiple points within a course, not introduced as a one-off activity.

- Calibration differs by context. In the project-based course, where students had rubrics, peer-feedback, and revision opportunities, alignment improved faster and reached a higher level (r = 0.89) than in the exam-based course (r = 0.66). This suggests that the richer the self-assessment toolset, the more quickly students calibrate, although the two courses also differ in group size (n = 11 versus n = 47) and assessment type, so the comparison is indicative only.

- Students benefit from understanding the gap. Focusing teacher feedback on the difference between student-proposed and examiner-assigned grades — rather than only on the grade itself — proved to be a powerful lever for reflection and improvement.

- Transparency of criteria matters. Students who had access to clear rubrics and grading criteria were better positioned to evaluate their own work honestly. Investing time in co-designing or explaining criteria upfront is essential.

Recommendations

Embed self-assessment at multiple points within a course rather than as a one-off activity — our data show that accuracy improves gradually across repeated moments, not immediately. Provide students with clear criteria upfront: rubrics and grading guidelines are essential for honest self-evaluation. Focus teacher feedback on the gap between student-proposed and examiner-assigned grades, as this drives reflection more effectively than grade feedback alone. Finally, introduce the self-assessment cycle early in the programme and increase its complexity across study years, giving students time to develop the attitude and skill needed to become genuinely self-reliant learners.

Practical outcomes

The Honest Mirror self-assessment cycle has been implemented in several courses at Wageningen University. In the Learn the Scientific Method in a Changing Climate course, students now propose and justify their own grade at two assessment moments (the research proposal and the final report), with examiner feedback explicitly addressing the difference between the student's and the examiner's assessment. In the Atmosphere-Vegetation-Soil Interaction course, students self-evaluate their knowledge after each of three exam moments, tracking their own development across the course. In the Integration course Soil, Water, Atmosphere (MAQ11809), students evaluate their writing, presenting, and collaborating skills using rubrics and questionnaires. Additionally, in the Fundamentals of Landscapes (SGL24306) and Inter-University sustainability challenge (MAQ52306, EWUU) courses, students evaluate themselves at two moments using a self-assessment questionnaire and every week using a participation rubric.

A toolset of rubrics, grading guidelines, and self-assessment templates has been developed and is currently in use across both courses. Data collected from both implementations are being analysed for a peer-reviewed publication, which will make the approach and its outcomes accessible to the broader higher education community. Discussions are ongoing with other BSW programme coordinators about extending the cycle to additional courses in the programme.